Learner engagement does not mean entertainment. It means giving people a clear reason to participate, enough structure to keep going, and practice that connects the course to real work. This guide covers 12 strategies to help improve student engagement for corporate training, higher education, and online learning programs, with examples from organizations that tracked the results.

Years ago, teams measured engagement by attendance. Now, self-paced eLearning and distributed teams have changed the standard. Barkley and Major describe engagement as the link between motivation and active learning: why learners start, and what they do to build skills.

The gap shows up in the data. LinkedIn’s Workplace Learning Report 2025 says 89% of L&D professionals believe proactive skill-building will help companies prepare for changes at work. Yet average completion rates in self-paced corporate training still sit below 20%. Interactive LMS modules, gamified assessments, real-time feedback, and peer collaboration give teams practical ways to close that gap and improve knowledge transfer.

“Engagement is the secret key to learning. When students actively engage or participate to pursue knowledge, they are actually preparing for themselves a better life ahead.” — Tom Dewing, Ho Ho Kus Associate and consultant for Silver Strong.

Challenges in Improving Learner Engagement

Understanding the obstacles is the starting point for designing around them. These are the four engagement challenges that L&D teams and eLearning platforms encounter most consistently.

Low Completion in Self-Paced Programs

Self-paced learning gives people control. It also removes deadlines, facilitator nudges, and peer pressure. Voluntary programs often fall below 15% completion. Mandatory ones can drop below 50%. Long courses, weak role fit, and silent learner drop-off usually cause the damage. Teams fix this by shortening paths, cutting friction, and adding timely check-ins.

Remote Learner Engagement

Remote learners work across locations, time zones, and devices. The hallway chat is gone. So is the shared classroom rhythm. Online programs need peer review, cohort progress views, clear discussion windows, and useful reminders. Otherwise, learners drift.

Content Relevance

Learners leave when content feels far from their work. Think generic compliance cases, outdated product training, or leadership examples from the wrong industry. Modular content helps teams update one unit, scenario, or quiz instead of rebuilding the full course.

Measuring Engagement ROI

Completion shows that someone reached the end. It does not show skill use. Stakeholders want proof that training changed work performance. Without analytics, L&D teams report activity and struggle to defend the budget.

“By weaving in project-based collaborative learning tasks or tossing in real career scenarios, you give students a reason to care, making lessons feel less like chores and more like stepping stones to something they want to do.” — Peter Koblyakov, Co-Founder, Raccoon Gang

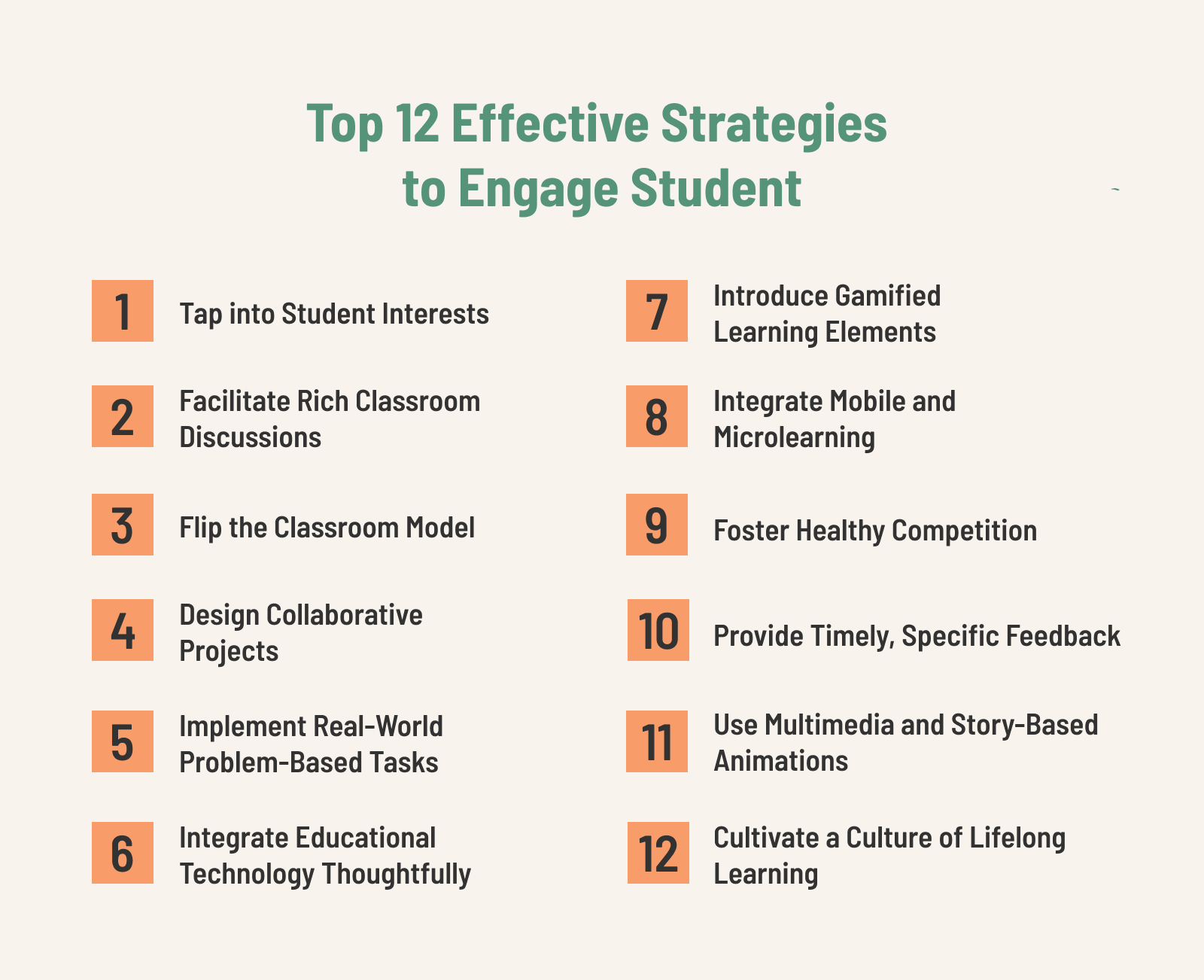

12 Strategies to Improve Learner Engagement

Most of these strategies can be adapted across corporate training, higher education, and online learning platforms, but the implementation details differ by context.

1. Connect Content to the Learner’s Actual Role

When learners cannot see how a topic connects to what they do every day, motivation drops. The fix is not adding a relevance statement at the start of a module — it is building content around authentic scenarios drawn from the learner’s actual context.

For a sales team, this means case studies from their industry and objection-handling exercises using real product situations. For a compliance program, this means scenarios that reflect the decisions learners actually face, not generic examples. For a university course, this means projects that produce something useful, not just assessable.

“The most important single factor influencing learning is what the learner already knows.” – David Ausubel, educational psychologist.

2. Structure Peer Interaction, Not Just Content

Peer interaction improves retention, creates accountability, and surfaces the kind of contextual knowledge that no course content can fully capture. But in online programs, peer interaction does not happen automatically — it requires design.

Structured peer review assignments, discussion prompts with a clear participation window, cohort-based progress dashboards, and small-group breakout tasks all create the conditions for genuine peer learning. When peer interaction is optional, most learners skip it. When it counts toward the completion criteria, participation rises.

3. Flip the Delivery Model

The flipped model moves information delivery outside the session — through a short video, a reading, or a podcast — and uses synchronous time for application, discussion, and problem-solving. For instructor-led programs, this shift allows facilitators to spend session time coaching rather than presenting.

For self-paced eLearning, the equivalent is front-loading conceptual material in short micro-modules and then presenting application exercises and case-based assessments that require learners to use what they have absorbed. The ratio of content to application is the variable worth optimizing.

4. Design Collaborative Projects with Clear Roles

Nothing generates engagement like working toward a shared goal with clear individual accountability. When team tasks are structured so that each member has a defined role — researcher, analyst, presenter, reviewer — no one can coast, and everyone has a reason to show up.

“When everyone has a clear role — researcher, document designer, or presenter — each student can pitch in in a way that actually matters. These collaborative learning moments aren’t just fun; they teach communication, empathy, and leadership. And when a team pulls off a win together, engagement just clicks.” — Olha Turutova, Head of Instructional Design & e-Learning Content, Raccoon Gang

Instructional design services can structure these activities within your LMS so that role assignments, submission workflows, and peer review happen inside the platform — reducing coordination overhead and keeping the learning loop tight.

5. Use Real-World Problem-Based Tasks

Problem-based learning assigns learners an authentic challenge that mirrors the complexity of real work: diagnose a process inefficiency, propose a product improvement, model a business scenario, design a communication plan. The task is the learning, not a vehicle for delivering pre-packaged content.

When learners see that their work produces something real — even in simulation — engagement climbs. The stakes feel different when the scenario is drawn from actual organizational context rather than a textbook example.

6. Integrate Technology Where It Reduces Friction

Technology improves engagement when it removes barriers and adds interactivity — and creates friction when it is complex, slow, or mismatched to the learning context. The question is not which tools are available but which tools reduce the gap between learner and learning.

Interactive simulations, branching scenarios, knowledge checks embedded in video, and collaborative digital workspaces all add genuine value when matched to the right learning objective. When tech is added for novelty rather than function, learners notice and disengage faster than with static content.

Online course development services at Raccoon Gang evaluate technology choices against learning objectives — not the other way around.

7. Introduce Gamification Tied to Learning Goals

Game mechanics — points, badges, leaderboards, challenge structures — tap into intrinsic motivation when they are tied to genuine learning progress rather than activity volume. A badge for completing a module rewards compliance. A badge for demonstrating a skill in a scenario-based assessment rewards competence.

When a nationwide educational organization moved online, Raccoon Gang built a gamified platform on Open edX using RG Gamification. In four months, the 11-developer team rolled out badges, challenges, and leaderboards tied to lesson objectives. Learners tracked progress on dashboards and received instant feedback. The result: over 7,000 active users, 5,000 new sign-ups in three months, and 80% of visits showing high engagement — with a tenfold increase in issued certificates.

The key design principle: every game mechanic should be traceable to a learning outcome. If it cannot be, it is decoration.

8. Deliver Learning in Mobile-Optimized Microlearning

More than half of learners in most corporate programs access content from a mobile device — often between tasks, during commutes, or in short windows throughout the workday. If the platform is not optimized for mobile, or if content requires sustained desktop attention to complete, a significant portion of the audience is effectively excluded.

Microlearning, built from modules of five to fifteen minutes with a single, clear objective, fits the reality of how people learn on the job. It reduces cognitive load, supports spaced repetition when sequenced deliberately, and tends to produce higher completion rates than longer formats.

When Axim Collaborative needed learners to access content on the go, Raccoon Gang used Open edX services to build a custom mobile app with offline access. Push notifications brought learners back to three-question challenges during breaks. Progress bars showed daily streaks. Engagement held steady across a distributed learner population.

9. Build AI-Powered Personalization into the Learning Path

In 2026, AI has moved from a feature mention to a functional layer in effective learning environments. For learner engagement specifically, AI contributes in four ways that manual design cannot replicate at scale.

Personalized content recommendations analyze each learner’s role, assessment results, and past behavior to surface what to learn next, replacing the static course catalog with a dynamic, prioritized view.

Conversational AI tutors give learners a way to ask questions, get explanations, and work through concepts between instructor touchpoints, without waiting for a human to respond. Open edX AI Tutor, currently in active community development, is one example of this capability being built into the platform rather than layered on top.

Adaptive learning paths adjust the sequence and depth of content in real time based on performance. Learners who demonstrate mastery move forward. Learners who struggle are routed through additional examples before continuing. At UCLA, introducing adaptive learning into a key course sent median exam scores from 53% to the 72–80% range, while average attrition dropped from 43.8% to 13.4%. Female student dropout rates fell from 73.1% to 7.4%.
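The routing rule described above can be sketched as a simple mastery threshold. This is a minimal illustration, not how any particular platform implements adaptive learning: the 0.8 threshold, the module names, and the `remediation` list are hypothetical, and real systems usually weigh more signals than a single score.

```python
from dataclasses import dataclass, field

MASTERY_THRESHOLD = 0.8  # hypothetical cutoff: share of assessment items answered correctly


@dataclass
class Module:
    name: str
    # hypothetical extra examples shown to learners who score below the threshold
    remediation: list[str] = field(default_factory=list)


def next_steps(module: Module, score: float, following: str) -> list[str]:
    """Return the modules a learner sees next after this module's assessment.

    Learners at or above the threshold move straight on; others are routed
    through the module's remediation items before continuing.
    """
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be a fraction between 0 and 1")
    if score >= MASTERY_THRESHOLD:
        return [following]
    return module.remediation + [following]


pricing = Module("pricing-basics", remediation=["pricing-worked-examples", "pricing-practice-set"])
print(next_steps(pricing, 0.9, "discount-policy"))  # ['discount-policy']
print(next_steps(pricing, 0.6, "discount-policy"))
# ['pricing-worked-examples', 'pricing-practice-set', 'discount-policy']
```

Keeping the threshold as a single named constant makes it easy to tune per course once assessment data shows where learners actually struggle.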

Predictive analytics flag learners at risk of disengagement before they drop off — based on login patterns, time-on-task, and assessment attempt behavior — giving L&D teams a window to intervene before the dropout becomes a statistic.
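Production systems often use trained models for this, but the idea can be illustrated with a transparent rule-based score over the three signals named above. The field names, thresholds (7 days without login, time-on-task below half the learner's usual level, 3 consecutive failed attempts), and the two-signal cutoff are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class LearnerActivity:
    learner_id: str
    days_since_last_login: int
    minutes_this_week: float       # time-on-task in the current week
    minutes_weekly_average: float  # the learner's own prior weekly average
    failed_attempts_in_a_row: int  # consecutive failed assessment attempts


def risk_score(a: LearnerActivity) -> int:
    """Count how many (hypothetical) warning signals a learner shows, from 0 to 3."""
    score = 0
    if a.days_since_last_login >= 7:
        score += 1
    # time-on-task has fallen below half of the learner's usual weekly level
    if a.minutes_weekly_average > 0 and a.minutes_this_week < 0.5 * a.minutes_weekly_average:
        score += 1
    if a.failed_attempts_in_a_row >= 3:
        score += 1
    return score


def at_risk(learners: list[LearnerActivity], min_signals: int = 2) -> list[str]:
    """Return IDs of learners showing at least `min_signals` warning signals."""
    return [a.learner_id for a in learners if risk_score(a) >= min_signals]


cohort = [
    LearnerActivity("ana", 2, 95, 100, 0),
    LearnerActivity("ben", 9, 10, 80, 1),
    LearnerActivity("caro", 8, 40, 60, 4),
]
print(at_risk(cohort))  # ['ben', 'caro']
```

Comparing time-on-task with each learner's own baseline, rather than a cohort-wide number, avoids flagging people who simply study in shorter sessions.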

For organizations evaluating Open edX, Raccoon Gang’s AI integration services support both native AI tutor deployment and custom AI-powered recommendation and analytics systems built on the platform’s API layer.

10. Foster Structured Competition

Competitive structures — quiz tournaments, timed challenge sprints, team-based leaderboards — activate engagement through social comparison and collaborative accountability. The design principle is that success should depend on effort and skill, not just speed, so that learners invest in genuine preparation rather than gaming the format.

Team-based competition is particularly effective in corporate training: it creates accountability to peers, mirrors the collaborative dynamics of actual work, and generates the kind of debrief conversations where knowledge transfer happens.

11. Close the Feedback Loop Quickly

Learners who receive feedback within 24–48 hours of an assessment retain the context that makes feedback useful. Learners who wait a week have moved on — the feedback arrives as a verdict on the past, not guidance for the next attempt.

Automated feedback on knowledge checks, rubric-based peer review, and AI-assisted grading all reduce the feedback gap without proportionally increasing instructor workload. The goal is specific, behavioral feedback (what the learner did, what the correct approach looks like, and what to do differently), not just a score or a grade.

RG Analytics surfaces assessment attempt patterns and feedback response rates at the cohort level, so L&D teams can identify where the feedback loop is breaking down before it affects completion.

12. Cultivate a Culture of Continuous Learning

Individual engagement strategies work better when the organizational context supports continuous learning — when managers reference training in performance conversations, when time for learning is protected rather than squeezed, and when completing a program is connected to a visible career outcome.

This is not an instructional design problem — it is a change management problem. But L&D teams can influence it by designing programs that produce outcomes stakeholders can see: shorter onboarding times, higher assessment scores, lower error rates, and promoted participants. When training is connected to results, the culture around it shifts.

How to Measure Learner Engagement

Completion rates are a starting point, not a destination. Measuring learner engagement means tracking the behaviors that predict learning outcomes — not just the outputs that satisfy a compliance checkbox.

The metrics that matter:

- Time-on-task by module — compares active learning time on interactive content with time spent on passive reading or video consumption; reveals where learners are actually engaging versus skimming

- Assessment attempt patterns — a high retry rate on a specific assessment indicates either a content difficulty problem or a psychological safety issue; both are actionable

- Discussion forum participation rate — a proxy for social engagement and perceived community; low rates often precede elevated dropout

- Drop-off point analysis — identifies the specific module or moment where learners leave the course path; the most actionable engagement metric for content improvement

- Completion rate of optional enrichment content (bonus videos, advanced exercises) — a reliable signal of genuinely engaged versus minimally compliant learners

- Peer collaboration activity (shared document edits, group chat participation, peer review submission rates) — measures the social learning layer

- Post-training performance indicators — where the program has a defined business outcome, this is the metric that matters most to stakeholders
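Drop-off point analysis can be computed from the furthest module each learner reached. The sketch below assumes a linear course path and a simple mapping from learner to last module reached; both the data shape and the module names are hypothetical, and raw LMS event logs would first need to be aggregated into this form.

```python
from collections import Counter


def drop_off_by_module(path: list[str], last_reached: dict[str, str]) -> dict[str, float]:
    """For each module, the share of learners who reached it but went no further.

    `path` is the ordered list of modules; `last_reached` maps each learner to
    the last module they reached (every value must appear in `path`).
    Learners who reached the final module count as finishers, not drop-offs.
    """
    position = {module: i for i, module in enumerate(path)}
    stopped_at = Counter(last_reached.values())
    rates = {}
    for i, module in enumerate(path[:-1]):  # the final module has no later step to drop before
        reached = sum(1 for m in last_reached.values() if position[m] >= i)
        rates[module] = stopped_at[module] / reached if reached else 0.0
    return rates


path = ["intro", "case-study", "quiz", "wrap-up"]
last_reached = {"a": "intro", "b": "case-study", "c": "case-study", "d": "wrap-up", "e": "quiz"}
rates = drop_off_by_module(path, last_reached)
print(rates)  # {'intro': 0.2, 'case-study': 0.5, 'quiz': 0.5}
```

Dividing by the number of learners who reached each module, rather than by total enrollment, keeps later modules from looking healthier simply because fewer people get there.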

Embed short pulse surveys — two to three questions — at critical course milestones to capture self-reported engagement before it shows up in behavioral data. Implement automated alerts for learners who have not logged in for a set number of days, followed by personalized re-engagement messages rather than generic reminders.
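The inactivity alert described above can be sketched as a scheduled job that compares each learner's last login with a threshold and writes a message that references where they left off. The 5-day threshold, the record fields, and the message wording are hypothetical, and actually delivering the message (email, push notification) is left out.

```python
from dataclasses import dataclass
from datetime import date, timedelta

INACTIVITY_DAYS = 5  # hypothetical threshold; tune per program


@dataclass
class Enrollment:
    name: str
    last_login: date
    current_module: str  # where the learner left off
    modules_left: int


def reengagement_messages(enrollments: list[Enrollment], today: date) -> list[str]:
    """Build a personalized nudge for each learner inactive for INACTIVITY_DAYS or more."""
    cutoff = today - timedelta(days=INACTIVITY_DAYS)
    return [
        f"Hi {e.name}, you stopped at '{e.current_module}'. "
        f"{e.modules_left} module(s) left to finish the course."
        for e in enrollments
        if e.last_login <= cutoff
    ]


learners = [
    Enrollment("Lena", date(2025, 3, 2), "Objection handling", 3),
    Enrollment("Omar", date(2025, 3, 9), "Pricing basics", 5),
]
for message in reengagement_messages(learners, today=date(2025, 3, 10)):
    print(message)
# Hi Lena, you stopped at 'Objection handling'. 3 module(s) left to finish the course.
```

Naming the specific module and the remaining workload is what separates this from a generic "we miss you" reminder.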

Harvard, Arizona State University, and the University of Washington have all worked with Raccoon Gang to refine their continuing education offerings, using RG Analytics to monitor learner interaction with microlearning modules and live webinars. At UCLA, analyzing collaboration logs and engagement rates on specific modules gave the instructional team the data needed to restructure content before the next cohort — producing the attrition and exam score improvements documented above.

Custom LMS dashboards built on Open edX can surface drop-off points, participation trends, and at-risk learner flags in a single view — giving L&D teams the visibility to act before engagement problems become completion failures.

The Final Word

Learner engagement is a design problem, not a motivation problem. When the content is relevant, the feedback is timely, the platform works on the device the learner actually uses, and the analytics give L&D teams visibility into what is happening, completion rates rise, and knowledge transfer improves.

There is no universal playbook. The right combination of strategies depends on your learner population, your content domain, and your organizational context. But the patterns are consistent: relevance, structure, feedback, and social accountability produce engagement that self-directed motivation alone cannot sustain.

If you are working to improve learner engagement in a corporate training program, a university course, or an online platform — and want to see what the right combination of instructional design, platform configuration, and analytics looks like in practice — Raccoon Gang can help.

FAQ

How do you improve student engagement in corporate training?

What LMS features most directly support learner engagement?

How does gamification improve learner engagement?

What is the difference between student engagement and learner engagement?

How can AI improve learner engagement in eLearning?
